MCP vs Traditional APIs: Why AI Needs a New Protocol Layer

Model Context Protocol (MCP) is emerging as the standard way AI models interact with tools and data sources. But how is it different from REST APIs and function calling? A side-by-side comparison of architecture, capability discovery, and why a protocol layer matters for agentic AI.

REST APIs changed how software systems talk to each other, and most modern applications are built on them. But when AI models need to interact with external tools and data sources, REST APIs create a problem that gets worse with every new integration.

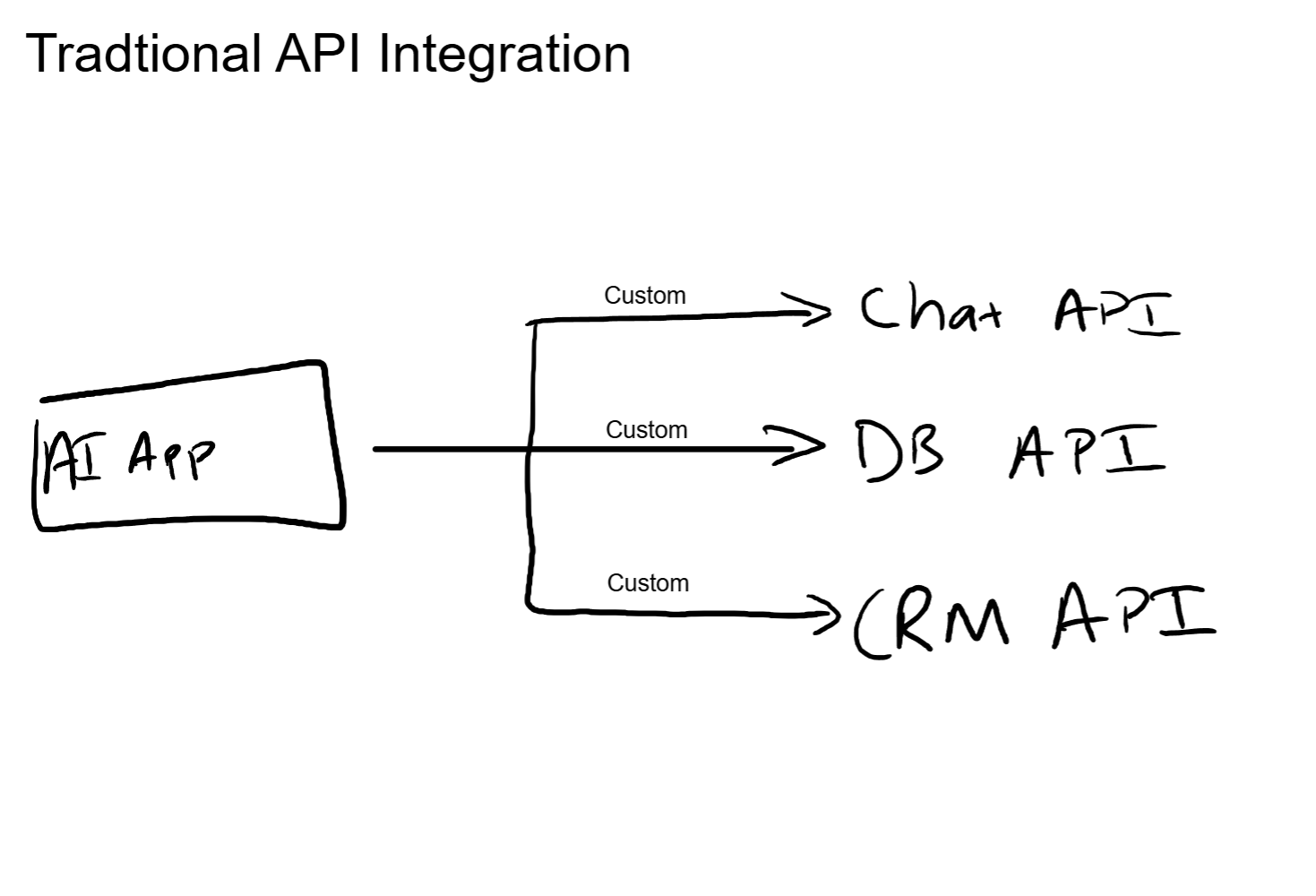

The problem is not that REST APIs do not work. They do. The problem is that every API integration is a custom job. Different endpoints, different authentication schemes, different request formats, different error handling. For a developer connecting an app to three APIs, that is manageable. For an AI agent that needs to dynamically select from dozens of tools based on the task at hand, it becomes an architectural bottleneck.

Model Context Protocol, or MCP, is a standardized protocol layer designed specifically for AI-to-tool communication. It changes who does the integration work, how tools get discovered, and who decides which tool to use. This post breaks down what MCP actually is, how it compares to traditional API integration, and when each approach makes sense.

How LLMs Use Tools Today

The current standard for giving an LLM access to external tools is function calling. The developer defines a JSON schema that describes each available tool: its name, what it does, and what parameters it accepts. That schema is sent to the model along with the prompt, typically through a dedicated tools field in the API request. When the model determines it needs to use a tool, it outputs a structured function call that matches one of those schemas. The application layer intercepts that call, executes the actual API request, and feeds the result back to the model.
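A minimal sketch of this pattern in Python, with no real model or API involved. The get_weather tool, its schema, and the hard-coded model output are hypothetical stand-ins, and the schema follows the general JSON Schema shape that function-calling APIs tend to use rather than any specific vendor's format.

```python
import json

# Hypothetical tool schema, written by hand and sent with each request.
WEATHER_TOOL = {
    "name": "get_weather",
    "description": "Return the current temperature for a city.",
    "parameters": {
        "type": "object",
        "properties": {"city": {"type": "string"}},
        "required": ["city"],
    },
}

def get_weather(city: str) -> dict:
    # Stand-in for the real API request the application would make.
    return {"city": city, "temp_c": 21}

# Hand-wired registry: every tool the model may call must be listed here.
HANDLERS = {"get_weather": get_weather}

def dispatch(model_output: str) -> str:
    """Execute the structured call the model emitted and return a JSON result."""
    call = json.loads(model_output)
    handler = HANDLERS.get(call["name"])
    if handler is None:
        return json.dumps({"error": f"unknown tool: {call['name']}"})
    return json.dumps(handler(**call["arguments"]))

# Pretend the model produced this structured call.
model_output = '{"name": "get_weather", "arguments": {"city": "Oslo"}}'
print(dispatch(model_output))
```

Note that the schema, the handler, and the registry entry are three separate things the developer has to keep in sync by hand.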

This works, and it is the pattern most tool-using LLM applications run on today. But every piece of it is hand-wired by the developer.

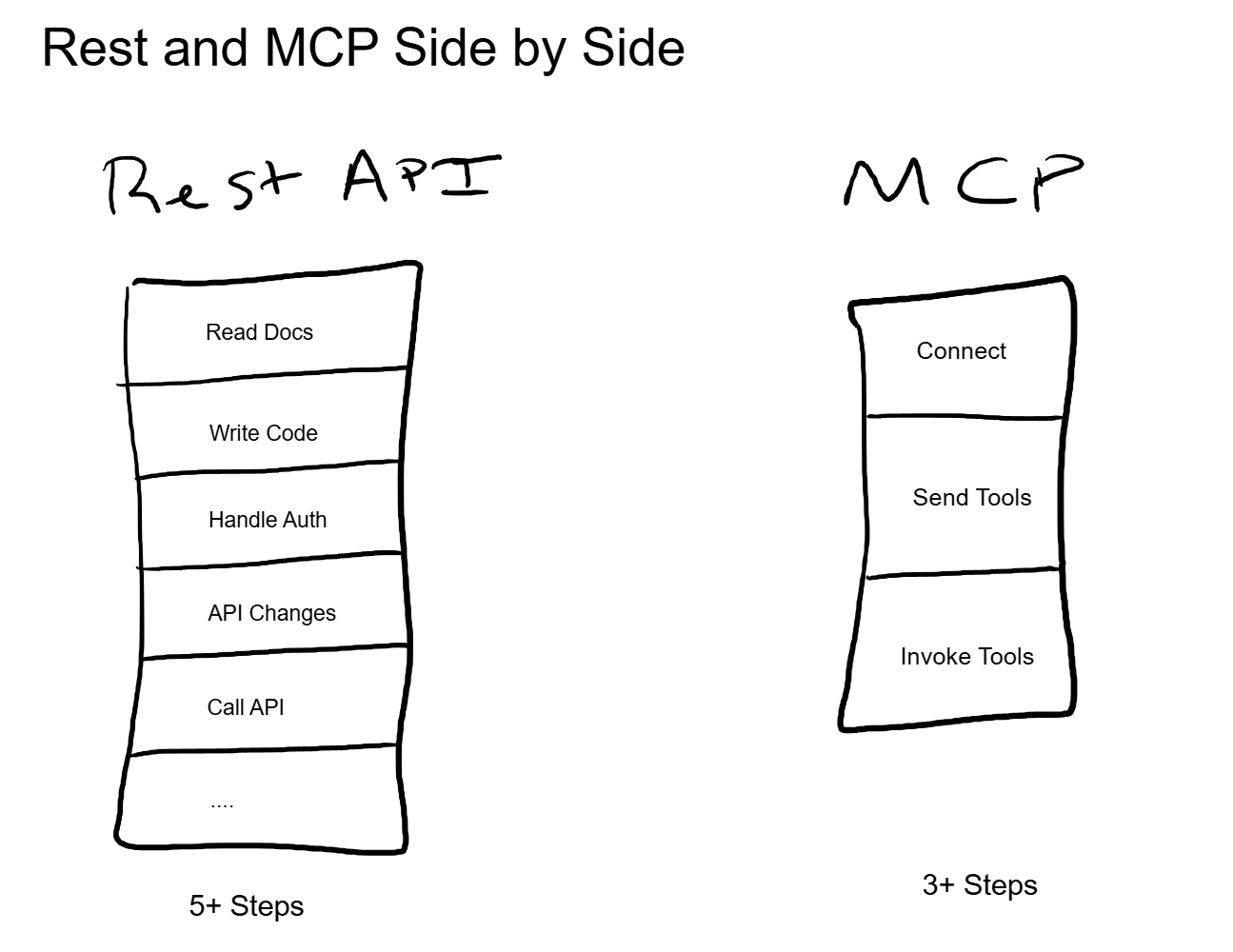

Adding a new tool means writing a new schema, integrating the API endpoint, handling its specific authentication, parsing its specific response format, and redeploying the application. Updating an existing tool means updating the schema, testing that the model still calls it correctly, and redeploying again. Removing a tool means making sure the model does not try to call something that no longer exists.

For a single-purpose application with two or three tools, this overhead is trivial. For an AI agent that needs to interact with a company's entire tool ecosystem, it becomes the integration problem that consumes most of the development time.

Why This Does Not Scale

The core issue is the N-times-M integration problem. If you have N AI applications and M tools, each application needs a custom integration for each tool. That is N times M integration points, each one maintained independently.
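To make the arithmetic concrete, here is a small sketch. The comparison against a shared protocol, where each application implements one client and each tool one server (N plus M), is an assumption about how a common protocol layer changes the count, and the numbers are purely illustrative.

```python
def point_to_point(n_apps: int, m_tools: int) -> int:
    # Every application maintains its own integration for every tool.
    return n_apps * m_tools

def shared_protocol(n_apps: int, m_tools: int) -> int:
    # Assumption: one client per application plus one server per tool.
    return n_apps + m_tools

for n, m in [(2, 3), (5, 20), (10, 50)]:
    custom = point_to_point(n, m)
    shared = shared_protocol(n, m)
    print(f"{n} apps x {m} tools: {custom} custom integrations vs {shared} shared")
```

The gap is small for a handful of tools and grows quickly as either side of the product grows.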

When an API provider changes their endpoint structure, every application that uses it needs to be updated. When a new tool gets added to the ecosystem, every application that should use it needs a new integration. When a tool's capabilities change (new parameters, deprecated fields, additional actions), every schema referencing it needs to be revised.

This is not a theoretical concern. Anyone who has built agents that call multiple external services has lived this. The agent itself might take a week to build. Keeping its tool integrations current is an ongoing operational cost that never stops.

Now consider the model's perspective. Every tool schema occupies space in the context window. An agent with 50 tools has 50 schemas loaded into every request, even if the current task only requires one of them. That is wasted context, and as covered in earlier posts in this series, context window space directly affects both cost and quality.
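A rough back-of-the-envelope sketch of that overhead. The schema shape is hypothetical, and the four-characters-per-token ratio is only a common rule of thumb; real tokenizers will give different numbers.

```python
import json

CHARS_PER_TOKEN = 4  # Assumption: rough heuristic, varies by tokenizer.

def make_schema(i: int) -> dict:
    # Hypothetical tool schema of modest size.
    return {
        "name": f"tool_{i}",
        "description": "Look up a record by id and return selected fields.",
        "parameters": {
            "type": "object",
            "properties": {
                "id": {"type": "string", "description": "Record identifier"},
                "fields": {"type": "array", "items": {"type": "string"}},
            },
            "required": ["id"],
        },
    }

def estimate_tokens(schemas: list[dict]) -> int:
    # Approximate token count from the serialized size of the schemas.
    return len(json.dumps(schemas)) // CHARS_PER_TOKEN

all_tools = [make_schema(i) for i in range(50)]
one_tool = all_tools[:1]
print("50 schemas:", estimate_tokens(all_tools), "tokens (approx.)")
print("1 schema:", estimate_tokens(one_tool), "tokens (approx.)")
```

Whatever the exact ratio, the cost scales with the number of schemas loaded, not with the number the task actually needs.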

What MCP Actually Is

MCP, or Model Context Protocol, is a standardized protocol that defines how AI applications discover, connect to, and interact with external tools and data sources. It was introduced by Anthropic and has been adopted across a growing number of AI frameworks and tool providers.

The simplest analogy is USB. Before USB, every peripheral device had its own connector, its own driver, its own communication protocol. Adding a new device meant finding the right cable, installing custom drivers, and hoping for compatibility. USB standardized the connection: one protocol, one connector type, and the device tells the computer what it is and what it can do when you plug it in.

MCP does the same thing for AI-to-tool communication. One protocol. The tool tells the AI what it can do. The AI uses it.

The Three Primitives

MCP organizes everything a server can offer into three categories:

Tools are actions the model can execute. Query a database, send a message, create a file, run a calculation. Each tool has a name, a description, and a parameter schema. The model reads the description, decides if the tool is relevant, and calls it with the right parameters.

Resources are data the model can read. A file's contents, a database record, a configuration value. Resources are read-only. They give the model information without changing anything on the server side.

Prompts are templated instructions that the server provides. A prompt template for "summarize this document" or "analyze this dataset" with predefined structure. The client can use these templates to guide the model's behavior for that server's specific domain.

These three primitives cover the vast majority of AI-to-tool interactions. The standardization is the point. Every MCP server expresses its capabilities using the same structure, regardless of what it actually does under the hood.
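As an illustration, here is roughly what one entry of each kind looks like, written as Python dictionaries. The field names mirror the MCP specification at the time of writing (worth checking against the current revision); the "docs" server and its entries are invented for the example.

```python
# Hypothetical entries for an imaginary "docs" server.
tool = {
    "name": "search_docs",
    "description": "Full-text search over the documentation set.",
    "inputSchema": {
        "type": "object",
        "properties": {"query": {"type": "string"}},
        "required": ["query"],
    },
}

resource = {
    "uri": "file:///docs/README.md",
    "name": "README",
    "mimeType": "text/markdown",
}

prompt = {
    "name": "summarize_document",
    "description": "Summarize a document for a technical audience.",
    "arguments": [
        {"name": "uri", "description": "Document to summarize", "required": True}
    ],
}

def describe(kind: str, entry: dict) -> str:
    # Each primitive is identified by a name and carries its own metadata.
    return f"{kind}: {entry['name']}"

for kind, entry in [("tool", tool), ("resource", resource), ("prompt", prompt)]:
    print(describe(kind, entry))
```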

Client-Server Architecture

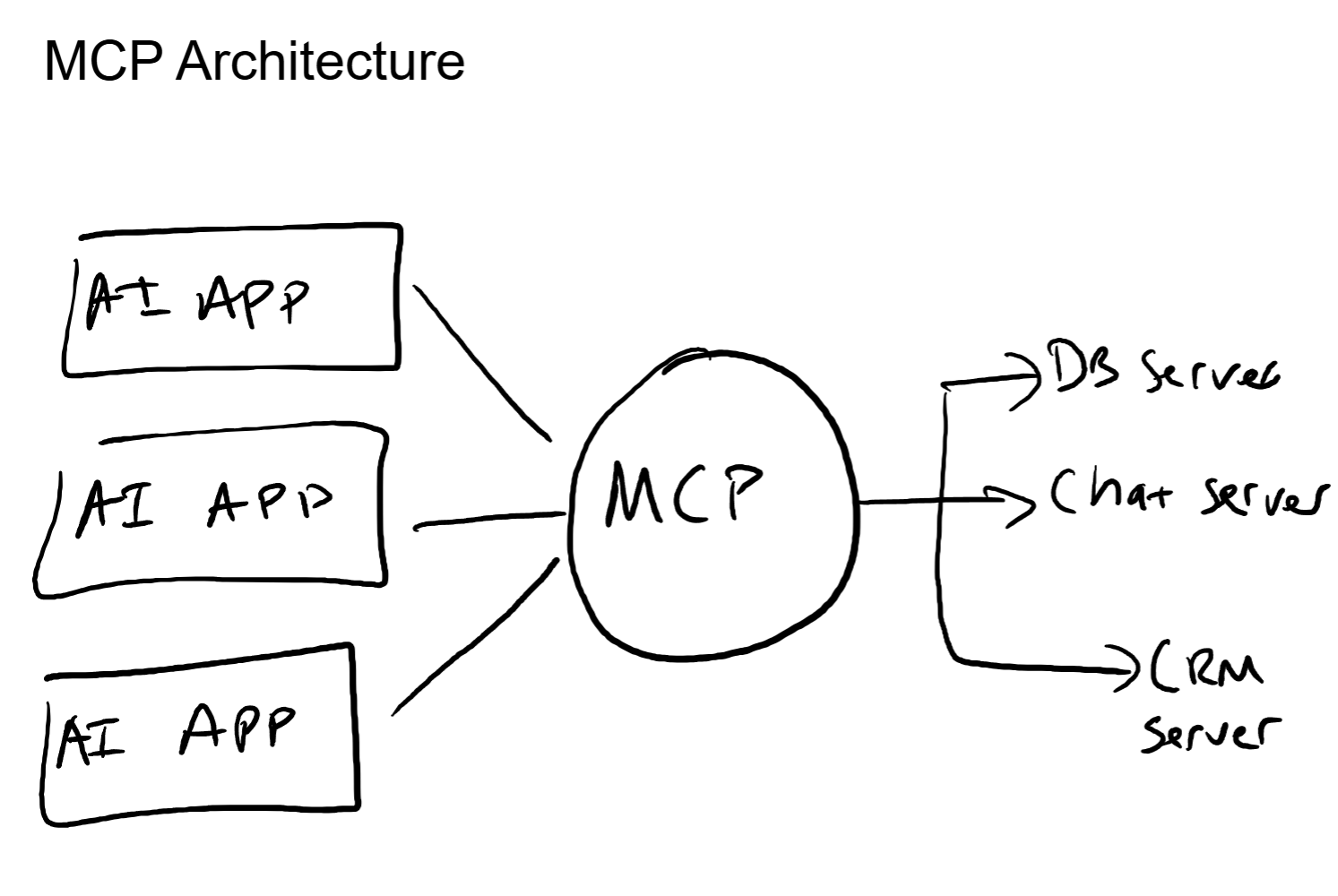

MCP uses a client-server model. The AI application runs an MCP client. Each tool provider runs an MCP server. The client connects to one or more servers, discovers what each server offers, and makes those capabilities available to the model.

Here is the key part. The client does not need to know in advance what a server can do. It connects, asks "what do you offer?" and the server responds with what amounts to a capability manifest: every tool, resource, and prompt it supports, along with their schemas and descriptions. In protocol terms, this is an initialization exchange followed by list requests (tools/list, resources/list, prompts/list).

The model then has a live, accurate picture of every available tool without the developer having to maintain any of it manually.
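The discovery exchange can be sketched with plain JSON-RPC 2.0 messages, which is the message format MCP uses. This is a simplified illustration, not a working client: FakeServer is a hypothetical in-memory stand-in, there is no transport or initialization step, and the query_db tool is invented.

```python
import json

class FakeServer:
    """In-memory stand-in for an MCP server; no transport involved."""

    def __init__(self, tools: list[dict]):
        self._tools = tools

    def handle(self, raw_request: str) -> str:
        request = json.loads(raw_request)
        if request["method"] == "tools/list":
            result = {"tools": self._tools}
            return json.dumps({"jsonrpc": "2.0", "id": request["id"], "result": result})
        # JSON-RPC 2.0 reserves -32601 for "Method not found".
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": request["id"], "error": error})

def discover_tools(server: FakeServer) -> list[dict]:
    # The client asks what the server offers instead of relying on hardcoded schemas.
    request = {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}
    response = json.loads(server.handle(json.dumps(request)))
    return response["result"]["tools"]

server = FakeServer([
    {
        "name": "query_db",
        "description": "Run a read-only SQL query.",
        "inputSchema": {
            "type": "object",
            "properties": {"sql": {"type": "string"}},
            "required": ["sql"],
        },
    },
])
for t in discover_tools(server):
    print(t["name"], "-", t["description"])
```

The client code contains no tool names at all; everything it knows about query_db came from the server's response.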

Side-by-Side: REST API vs MCP

Discovery

With REST APIs, discovery is a human activity. A developer reads the API documentation, identifies the relevant endpoints, understands the request/response format, and writes integration code. If the documentation is poor (and it often is), discovery also involves trial and error.

With MCP, discovery is programmatic. The client connects to a server, and the server declares its capabilities in a structured format the model can read directly. No documentation required for the integration itself. The server's capability manifest is the documentation.

This matters for agents. An agent using REST APIs can only use tools that a developer explicitly integrated. An agent using MCP can discover and use any tool from any connected MCP server, even tools that did not exist when the agent was built.

Schema Evolution

REST API changes break things. A new required parameter, a renamed field, a deprecated endpoint. Every client needs to be updated when the server changes. Version pinning helps, but it creates its own maintenance burden.

With MCP, the server updates its capability manifest, and the client discovers the changes on the next connection, or sooner if the server notifies connected clients that its tool list has changed. The model sees the updated tool schema and adjusts its behavior. For additive changes, that typically means no client code changes and no redeployment. The server is the source of truth for its own capabilities, and the client always reads the latest version.
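One way a client might surface such changes is to compare the tool listing from the previous connection with the current one. This is a hypothetical helper, not part of MCP itself, and the ticketing tools are invented for the example.

```python
def diff_tools(previous: list[dict], current: list[dict]) -> dict:
    """Compare two tool listings by name and report what changed."""
    old = {t["name"]: t for t in previous}
    new = {t["name"]: t for t in current}
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": sorted(n for n in old.keys() & new.keys() if old[n] != new[n]),
    }

# Hypothetical listings captured on two successive connections.
before = [
    {"name": "create_ticket", "inputSchema": {"required": ["title"]}},
    {"name": "close_ticket", "inputSchema": {"required": ["id"]}},
]
after = [
    {"name": "create_ticket", "inputSchema": {"required": ["title", "priority"]}},
    {"name": "assign_ticket", "inputSchema": {"required": ["id", "user"]}},
]
print(diff_tools(before, after))
```

A diff like this is mostly useful for logging and review: the model picks up the new schema either way, but a newly required parameter or a removed tool is still worth a human glance.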

Multi-Tool Orchestration

With REST APIs, the application is the orchestrator. The developer writes the logic that decides which API to call, in what order, with what data. The model generates text; the application decides what to do with it.

With MCP, the model can be the orchestrator. It sees all available tools, understands their descriptions and parameters, and decides which ones to invoke and in what sequence based on the user's task. The developer builds the capability layer. The model handles the decision-making.

That is really the difference between function calling with hardcoded schemas and MCP with dynamic discovery. Function calling gives the model a fixed menu. MCP gives the model a live inventory that updates itself.

Capability Discovery: The Key Differentiator

The single most important thing MCP changes is who knows what tools are available and how that information stays current.

In a function calling setup, the developer is the bottleneck. They have to know what tools exist, write the schemas, keep them updated, and manage the lifecycle. The model only knows what the developer has explicitly told it.

In an MCP setup, the server is the authority. It declares its own capabilities. The client discovers them. The model works with whatever is available. If a server adds a new tool, the model can use it on the next connection without any developer involvement.

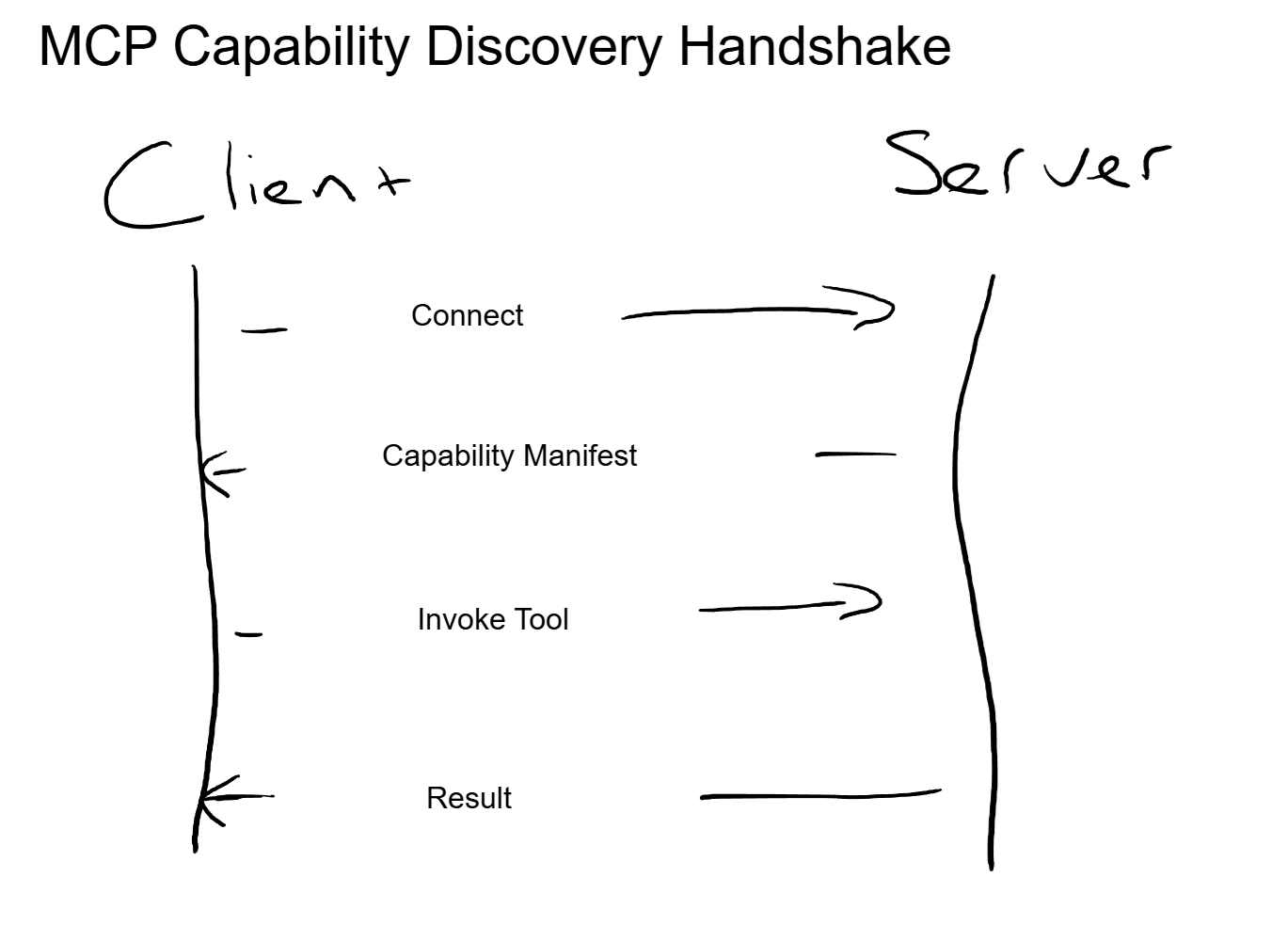

Let's walk through the handshake:

- The MCP client establishes a connection to an MCP server.

- The server responds with its capability manifest: a structured list of every tool, resource, and prompt it offers, including names, descriptions, and parameter schemas.

- The client presents these capabilities to the model as available tools.

- The user asks a question or assigns a task.

- The model evaluates the available tools and selects the relevant ones.

- The client invokes the selected tool on the server with the appropriate parameters.

- The server executes the action and returns the result.

- The model incorporates the result into its response.

Every step after the initial connection is dynamic. No hardcoded endpoints. No static schemas. No redeployments when capabilities change.
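The walkthrough above can be sketched end to end in a few lines. Everything here is a simplified assumption: FakeServer is an in-memory stand-in with no real transport, and stub_model_select replaces the model's decision with a keyword check and hard-coded arguments purely so the example runs.

```python
class FakeServer:
    """In-memory stand-in for an MCP server exposing two tools."""

    def __init__(self):
        self._tools = {
            "add": {"description": "Add two numbers.", "run": lambda a, b: a + b},
            "echo": {"description": "Repeat text back.", "run": lambda text: text},
        }

    def list_tools(self) -> list[dict]:
        # The server describes what it offers.
        return [
            {"name": name, "description": tool["description"]}
            for name, tool in self._tools.items()
        ]

    def call_tool(self, name: str, arguments: dict):
        # The server executes the action and returns the result.
        return self._tools[name]["run"](**arguments)

def stub_model_select(task: str, tools: list[dict]) -> tuple[str, dict]:
    # Stand-in for the model's choice; a real model reasons over the descriptions.
    names = {t["name"] for t in tools}
    if "sum" in task.lower() and "add" in names:
        return "add", {"a": 2, "b": 3}
    return "echo", {"text": task}

def run_task(server: FakeServer, task: str) -> str:
    tools = server.list_tools()                   # discover capabilities
    name, args = stub_model_select(task, tools)   # model selects a tool
    result = server.call_tool(name, args)         # client invokes it on the server
    return f"{name} -> {result}"                  # result goes back into the response

print(run_task(FakeServer(), "What is the sum of 2 and 3?"))
print(run_task(FakeServer(), "hello"))
```

The only tool-specific knowledge in run_task comes from list_tools at runtime, which is the property the handshake is meant to provide.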

The Networking Angle

If you have spent time managing network infrastructure, MCP maps to concepts you already know.

MCP is to AI what SNMP or NETCONF is to network devices: a standardized management protocol that lets a controller discover and interact with any compliant device without per-vendor custom integration. The controller does not need vendor-specific code for each switch or router. It connects, queries capabilities, and manages the device through a common protocol.

The capability discovery handshake plays a role similar to LLDP (Link Layer Discovery Protocol). With LLDP, devices announce themselves and their capabilities to their neighbors, and the network builds its understanding of the topology dynamically, without static configuration for every link. MCP servers describe their tools to clients in the same spirit, with one difference: the client asks for the list rather than waiting for an advertisement.

The N-times-M problem that MCP solves is the same problem NETCONF solved for network configuration. Before NETCONF, configuring N devices from M management systems meant N times M custom integrations. NETCONF introduced one protocol, and every compliant device could be managed by any compliant controller. MCP does the same thing for AI tool access.

Having built systems on both sides of this (network infrastructure and AI agents), I find the architectural parallel direct. The protocol standardization argument that won in networking is the same argument driving MCP adoption in AI.

When MCP Makes Sense (and When It Does Not)

MCP is not a universal replacement for REST APIs. It solves a specific problem: dynamic, model-driven tool selection across a diverse set of capabilities. Here is a simple framework for deciding which approach fits:

Use MCP when: The model needs to select from many tools dynamically based on the task. The tool ecosystem changes frequently. You are building agentic workflows where the model drives multi-step tool orchestration. You want to decouple tool provider updates from application deployments. Multiple AI applications need access to the same tool ecosystem.

Use REST APIs when: The integration is a single, well-defined API call that rarely changes. The developer, not the model, controls which endpoint gets called and when. The tool is used in a fixed pipeline with no dynamic selection needed. The API surface is simple enough that a static function schema is sufficient.

The spectrum runs from fully developer-controlled integration (REST with hardcoded logic) to fully model-controlled orchestration (MCP with dynamic discovery). Most production systems will use both, choosing the right approach for each integration based on how much autonomy the model needs over tool selection.

Key Takeaways

- MCP standardizes how AI discovers and uses tools. One protocol replaces per-tool custom integrations. The server declares capabilities; the client discovers them programmatically.

- It solves the N-times-M integration problem. Instead of every AI application maintaining custom code for every tool, each application speaks one protocol and each tool exposes one server, so every tool is accessible through a single protocol layer.

- Three primitives cover the surface area. Tools (actions), Resources (data), and Prompts (templates). Every MCP server expresses capabilities using this structure.

- Discovery is the differentiator. REST APIs require developers to read docs and hardcode integrations. MCP servers announce their capabilities programmatically, and the model works with whatever is available.

- The model becomes the orchestrator. Instead of developers writing tool-selection logic, the model evaluates available tools and decides which to invoke based on the task.

- MCP is not a REST replacement. It is a protocol layer for a specific use case: dynamic, model-driven tool access. Simple, static integrations are still well served by REST.

Up Next

Now that you understand what MCP is and why it exists, the next post goes deeper into how MCP servers are actually built. Transport layers, security considerations, authentication patterns, and the production decisions that determine whether an MCP deployment is robust or fragile.